True. I expect ZNN holder participation to be even lower than Pillars. How would we count their vote in a way that does not require X% participation of ZNN holders to take action?

I have to agree that delegated weight is a stronger, more reliable long-term means of showing support.

Throwing out an idea here regarding Informational ZIP voting… Given each Pillar runs their own “BIP process”, we can see each Pillar as their own “central editor”. What if, for Informational ZIPs only, the Pillar decides when to Accept it? They mark it Accepted after community debate and revision. Then the Pillar asks the community to vote on it, which simply gives it “weight”. The more ZNN / Pillar holders that vote YES on it over time, the more weight the ZIP has. We can come up with some weight algo that takes into account Pillars, Delegators, Sentinels, and ZNN holders.

Thoughts? This would apply to informational ZIPs only. Also, that would allow us to publish some NOW without the voting tool being ready now.
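To make the “weight algo” idea a bit more concrete, here is a minimal sketch. Everything in it is an assumption for illustration: the role names, the per-role multipliers, and the choice to scale by stake are not defined anywhere in this thread or in the protocol.

```python
from dataclasses import dataclass

# Hypothetical per-role multipliers; the thread does not fix any values.
ROLE_MULTIPLIER = {
    "pillar": 1.0,
    "delegator": 0.5,
    "sentinel": 0.5,
    "holder": 0.25,
}


@dataclass(frozen=True)
class YesVote:
    voter: str    # address or name of the voter
    role: str     # one of the keys in ROLE_MULTIPLIER
    stake: float  # ZNN held, or delegated weight for a Pillar (assumed unit)


def zip_weight(votes):
    """Sum the weighted stake of all YES votes, counting each voter once."""
    seen = set()
    total = 0.0
    for vote in votes:
        if vote.voter in seen:
            continue  # ignore repeat votes from the same voter
        seen.add(vote.voter)
        total += ROLE_MULTIPLIER[vote.role] * vote.stake
    return total


votes = [
    YesVote("pillar-a", "pillar", 100.0),
    YesVote("holder-b", "holder", 40.0),
    YesVote("pillar-a", "pillar", 100.0),  # duplicate, not counted again
]
print(zip_weight(votes))
```

Because the weight only accumulates YES votes, it can keep growing after the Pillar has marked the ZIP Accepted, which matches the “over time” part of the idea.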

Yeah, that’s fair, but there was some clear communication on this task, so imo it’s hard to ignore it.

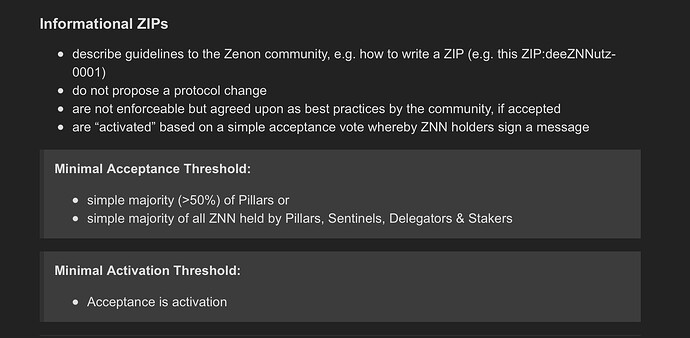

Given the currently relatively low engagement and the fact that informational ZIPs can easily be amended or changed, I’d agree with an approach that lowers the bar for acceptance/activation of these kinds of ZIPs.

However, I doubt people will have any inclination to vote once a ZIP has been deemed “accepted” unless it’s very easy to do and they’re incentivized to.

On the other hand, if the acceptance threshold is too low, they might even refuse to accept it as a legitimately “voted in” ZIP and simply ignore it.

Since this ZIP potentially defines the general process of any future ZIPs it would be good to have sufficient consensus to prevent it being continuously challenged and ending up in a messy, non-standardized approach to ZIPs.

Just some thoughts

I don’t know if I am sold on delegated weight = voting power at this stage. I was hoping to see some of this when AZ rolled out and was initially disappointed, but in retrospect I think the team built the AZ approval process to meet the community / pillars where they are, not where they want them to be, for the simple goal of not stalling. Also, I am very skeptical that we will ever reach a point where people give up profits because a pillar didn’t vote to their liking (this is just my personal view on human nature). I fully agree that the more complex we make this, the fewer people will engage with it. To Dumeril’s point (I think), we don’t need to make it perfect, just good enough to move forward and learn from.

I think I agree. I don’t think we are ready for delegated weight.

Maybe a modified delegated weight. I am a little concerned about whether we can get 50% of all the online pillars to do anything. Remember the last upgrade? A very large number of Pillars had to be forced offline somehow before they upgraded.

I realize this post probably isn’t that helpful… but in general I think a lot of this could be MVP1 MVP2 type thing. Sponsoring pillar is an interesting idea but by no means required for MVP1 to work as an example.

What is MVP1?

Maybe we need another block 27070 to knock everyone out so we can get a sense of how many are actually monitoring their pillars

Agile development / story mapping technique. MVP1 is the minimum viable product that delivers some increment of value and opens the feedback loop; MVP2 is the next-highest feature request, and so on.

I am going to collate some thoughts here:

What is the deal with the spork address?

Can we get someone to look through the code and do some detailed research on how this works?

I feel like we are missing something.

Mr Kaine said everything is already in place for the community to build, so maybe we are just assuming the devs are in control of that address.

Maybe they are and maybe that changes in Phase 1.

Personally I think they have a user activation method for sporks planned or already in place.

They have stated in the light paper their goal is for a network that has no single points of failure.

I think part of their emphasis on users running nodes is because that makes them sovereign network participants; I think the governance consensus is based on this.

So the next thought is, embedded smart contract calls.

I’d like to know more about the scope and scale. Do we envision a time where there may be hundreds or thousands of these functions implemented at the protocol level? Or are they reserved for specific scenarios?

What are the performance implications? Could a function that behaves unexpectedly cause problems for the broader network or are they encapsulated?

Could it be that the reason why the nodes are crashing or running up high RAM usage after some time is because an embedded function is causing this?

I think protocol-level functions are very cool in terms of security and performance, but why should the community get to vote on whether one gets implemented? That seems like unnecessary bureaucracy, unless we have a ceiling on the number of functions that can run embedded.

What we really need is a testnet. If somebody can present their function working and not breaking on a testnet, there should be no need to go through the lengthy process of ZIPs and voting. We need to make sure we don’t create voting and governance fatigue.

I think we should try to streamline the process for technical improvement proposals and additions.

If it doesn’t change fundamentals, does not interfere with existing functionality, and can be shown to work on a testnet, why create roadblocks?

If somebody proposes a ZIP that wants to change fundamental constants then we can debate that and have votes and safeguards and all of that.

But if somebody just wants to implement some functions for the DEX they are building, do we really need all this formality?

Anyway that’s just a few thoughts.

Apart from the Pointer Repo and the consensus thresholds, the process described above has been pretty much tried and tested with BIPs. It’s just explained in more detail…

We initially tried to account for potentially low voting participation by leaving it up to the ZIP authors to specify consensus thresholds on a ZIP by ZIP basis. But some people disliked the idea.

I am still in favor of this since it provides the necessary flexibility and prevents the framework from being too rigid.

To prevent misuse, participation thresholds can be set - the lower the necessary quorum the higher the necessary participation threshold, for instance.
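A minimal sketch of how such a sliding rule could look, reading “quorum” here as the share of YES votes a ZIP author asks for and “participation threshold” as the minimum turnout. That reading, the linear relationship, and all the numbers below are assumptions for illustration, not part of any agreed process.

```python
def required_participation(approval, lo=0.5, hi=1.0, p_min=0.1, p_max=0.5):
    """Map a chosen approval threshold to a minimum turnout.

    A lower approval threshold demands a higher turnout. The bounds
    (lo, hi, p_min, p_max) are hypothetical defaults.
    """
    if not lo <= approval <= hi:
        raise ValueError("approval threshold out of range")
    frac = (approval - lo) / (hi - lo)
    return p_max - frac * (p_max - p_min)


def zip_passes(yes, no, eligible, approval):
    """Check both the turnout requirement and the approval threshold."""
    cast = yes + no
    if cast == 0 or eligible <= 0:
        return False
    turnout_ok = cast / eligible >= required_participation(approval)
    approval_ok = yes / cast >= approval
    return turnout_ok and approval_ok


# Same ballots, two different author-chosen approval thresholds.
print(zip_passes(yes=30, no=10, eligible=100, approval=0.75))
print(zip_passes(yes=30, no=10, eligible=100, approval=0.5))
```

With the same 40 ballots, the stricter 75% approval setting only needs about 30% turnout, while the looser 50% setting needs 50% turnout, so an author cannot lower both bars at once.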

The BIP process specification also does not impose any consensus thresholds, btw.

If we kept that flexibility, the MVP 1-n approach would be possible. After all, this informational ZIP isn’t enforceable anyway. It’s just a document we can vote on to say we have found an agreement on the standard procedure to do these things.

I would probably mention in the ZIP type description that these thresholds are just recommendations and not mandatory for ZIP authors/sponsors.

There’s a thread somewhere here where I explained how sporks work. The address is defined with genesis. Check out zenon/zenon.go, zenon/config.go and chain/store/genesis.go.

I think one idea with embedded contracts is exactly to prevent broken contracts from being executed; i.e. it’s a safe environment by definition, where only known code with known requirements is executed. As long as there’s no defect in those, of course. But that’s relatively easy to monitor compared to potential user-level contracts.

I wonder though what the scope is that was intended for the embedded contracts.

On the one hand, we will have SC runtimes for user level at some point, and then we also have unikernels for zApp runtimes.

So in the long run, where does that leave embedded contracts? Considering they require sporks to integrate and be used, they will need a ZIP. I think we need a sub-process that deals entirely with embedded contracts.

I think the embedded contract may also be used to configure the unikernel.

I think unikernel and smart contract runtime (VM) would be mutually exclusive concepts. zApps would run on the SCR, whether that be unikernel tech or wasm or anything else.

Or why do you think unikernels are a distinct concept?

The distinction I make in my head between embedded and non-embedded smart contracts is that the former implement functionality that’s not absolutely necessary for the p2p tx routing and consensus core, but is still somewhat of a protocol-level extension of that. You could say, looking at the network, these contracts implement defining characteristics of Zenon, but they are still extensions beyond the pure ledger.

External smart contracts would not have those characteristics; they are user-level applications.

There should rarely be a requirement to add another embedded contract. If something can be implemented in “user space”, it should never be done in “kernel space”.

As you hinted at, there’s a big risk involved with these contracts. They are not running in any sort of isolated environment, unlike the potential external smart contract.

I’m not sure if it would be possible to elevate any of the existing ECs to external contracts at all, but one reason they exist in the first place is probably the simple fact that you don’t need to adapt a VM to implement and run them. Functionally, they could have been bound more directly into the core code, so I guess it was at least in part an architectural decision of system design to add this concept of an embedded VM. And a nice one.

Following that thought, I agree with you that ECs should be treated separately. It’s probably a good idea to maintain the structure they gave to the code in every process that’s being designed on top.

I’m not sure, but I think part of the reason for unikernels is to fragment global state for all zApps. With the EVM there is just one monolithic state, so maybe unikernels are a kind of sharding for global state.

And maybe they can act as Oracles of sorts too.

I think it said in the whitepaper that a special transaction can be sent to a unikernel to configure it.

That would probably happen with an embedded contract. Maybe in this way the unikernels can be customized, permissions and runtimes set, and even whole programs loaded.

Not to go off-topic, but I was curious what you guys think about this project’s upgrade process since they have some similarities to Zenon:

https://medium.com/koinosnetwork/the-worlds-simplest-dao-d6c1f65f8292

I had a quick read and it sounds not so different from what we have. As I understand it, their system is based on sporks as well (which can be both hard and soft forks in traditional semantics), but the activation method is not fixed and limited to an address hard-coded at network inception; instead it is (probably) configured with each individual proposal. I think it’s not stated explicitly in that article, but that is how I understand it.

That’s a nice twist as well; each spork can be activated by the proposing address or optionally a different one, if configured so.

Regarding votes, they seem to be very optimistic: each non-vote is a no-vote.
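To show why that is optimistic, here is a small sketch comparing a tally where abstentions count as NO against one that only looks at cast ballots. The simple-majority threshold and the function names are assumptions for illustration; the article may define the rule differently.

```python
def passes_non_vote_is_no(yes, eligible, threshold=0.5):
    """Every eligible voter who did not vote YES counts as NO."""
    if eligible <= 0:
        raise ValueError("eligible must be positive")
    return yes / eligible > threshold


def passes_cast_only(yes, no, threshold=0.5):
    """Only ballots actually cast are counted."""
    cast = yes + no
    if cast == 0:
        return False
    return yes / cast > threshold


# 40 YES, 5 NO, 55 of 100 eligible voters stayed silent.
print(passes_non_vote_is_no(yes=40, eligible=100))
print(passes_cast_only(yes=40, no=5))
```

With low participation, a proposal that wins clearly among cast ballots still fails once silence is counted as NO, which is the concern raised earlier in this thread about Pillar and ZNN holder turnout.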